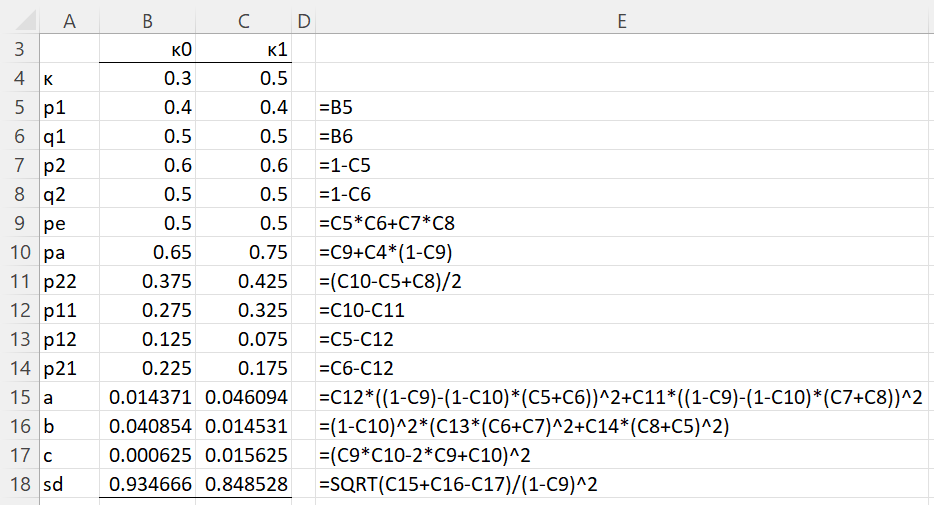

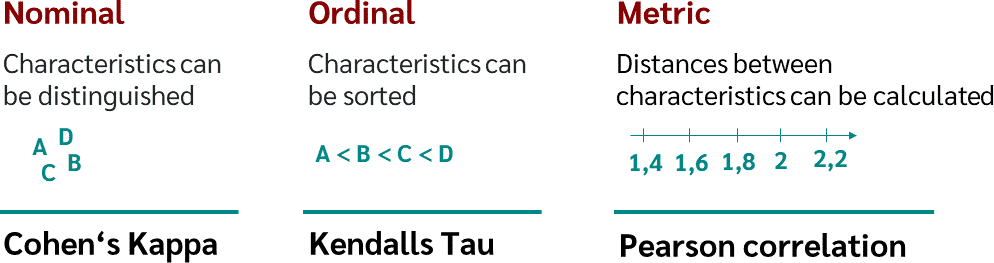

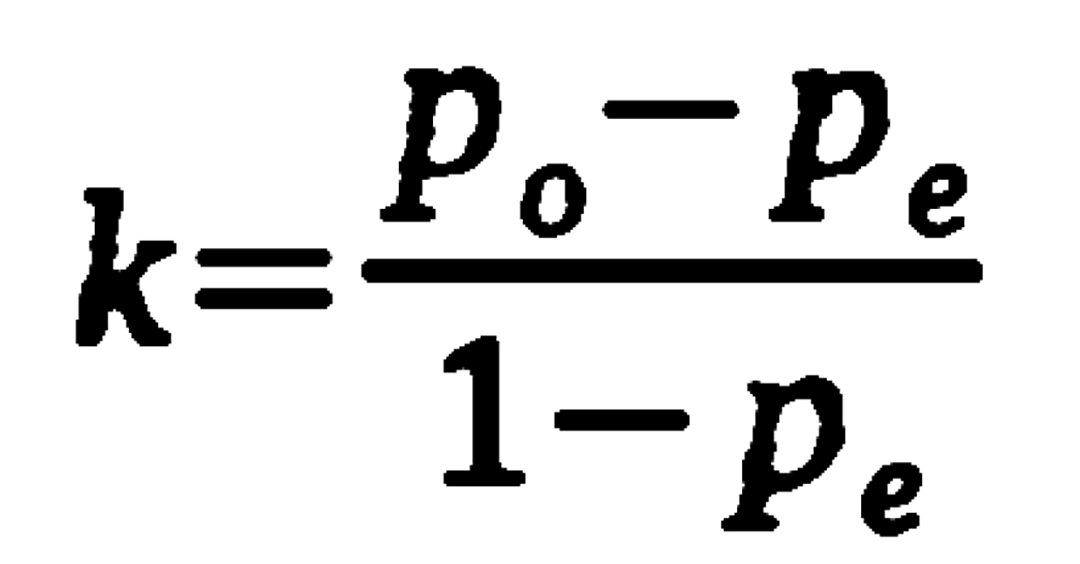

GitHub - thomaspingel/cohens-kappa-matlab: This is a simple implementation of Cohen's Kappa statistic, which measures agreement for two judges for values on a nominal scale. See the Wikipedia entry for a quick overview,

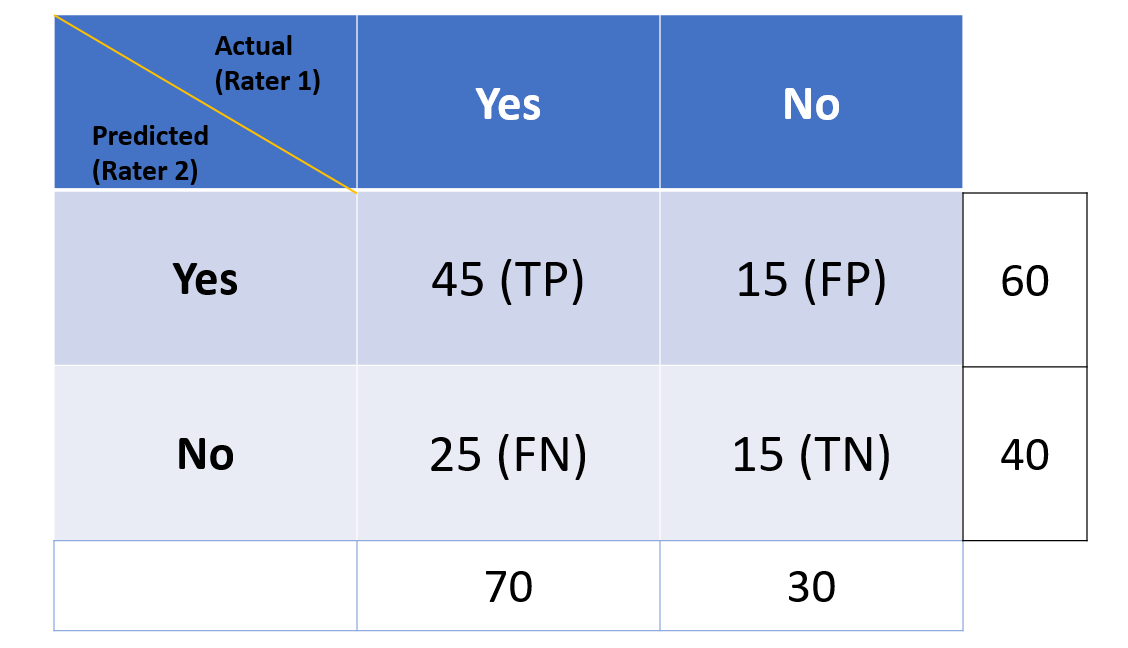

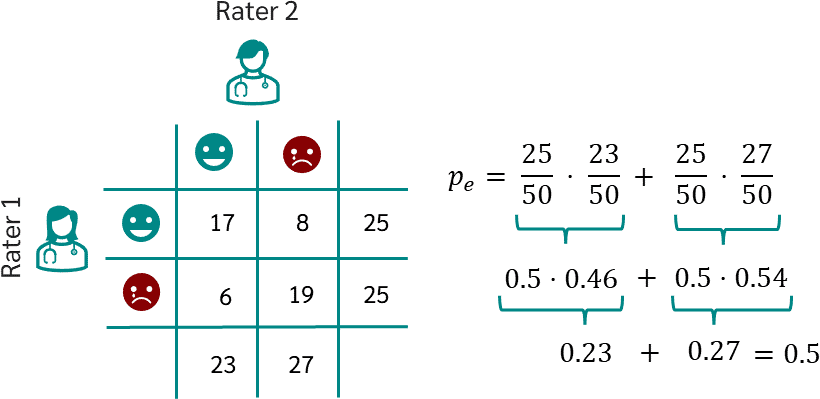

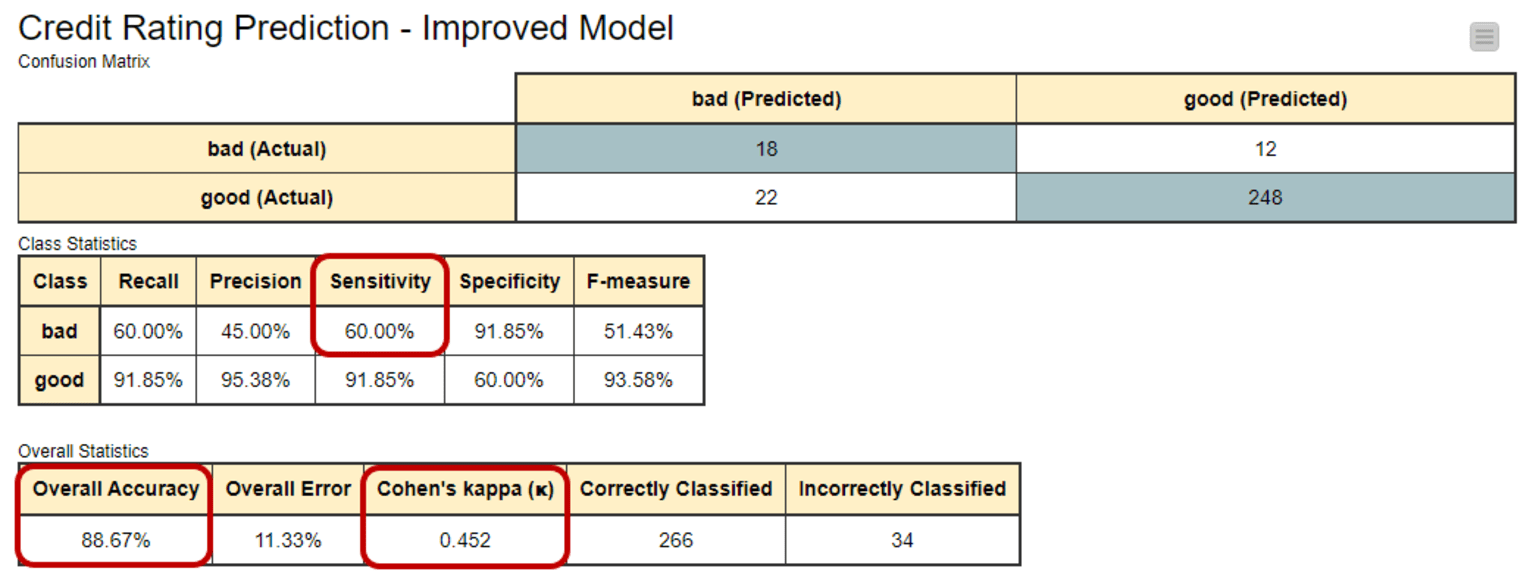

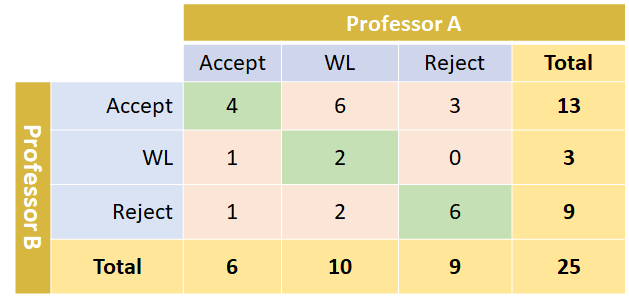

Multi-Class Metrics Made Simple, Part III: the Kappa Score (aka Cohen's Kappa Coefficient) | by Boaz Shmueli | Towards Data Science

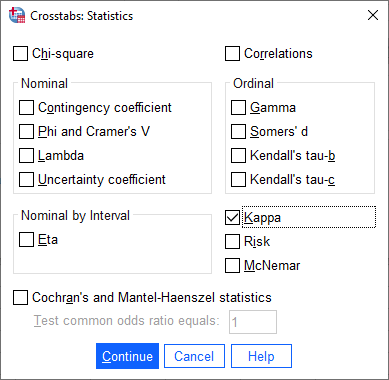

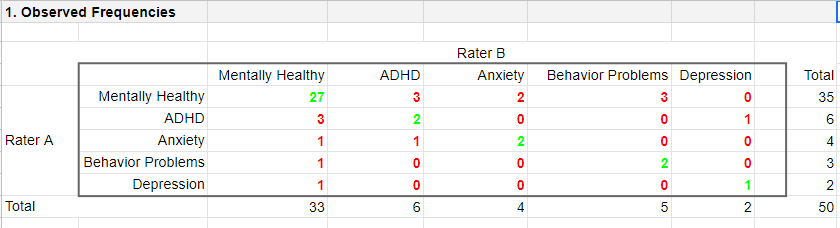

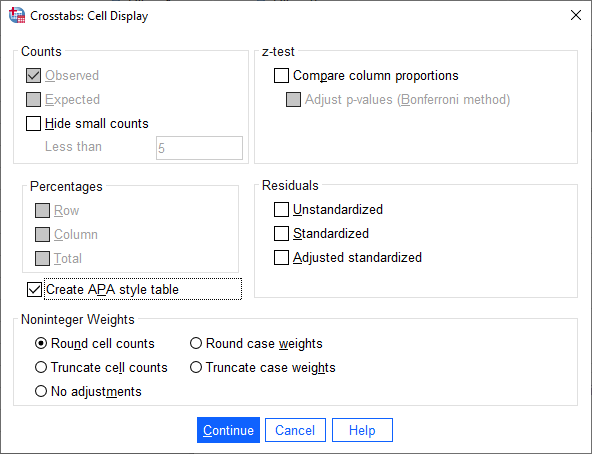

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics